Applications are closed, but you can express your interest in this course using the form above. We will contact you when applications reopen.

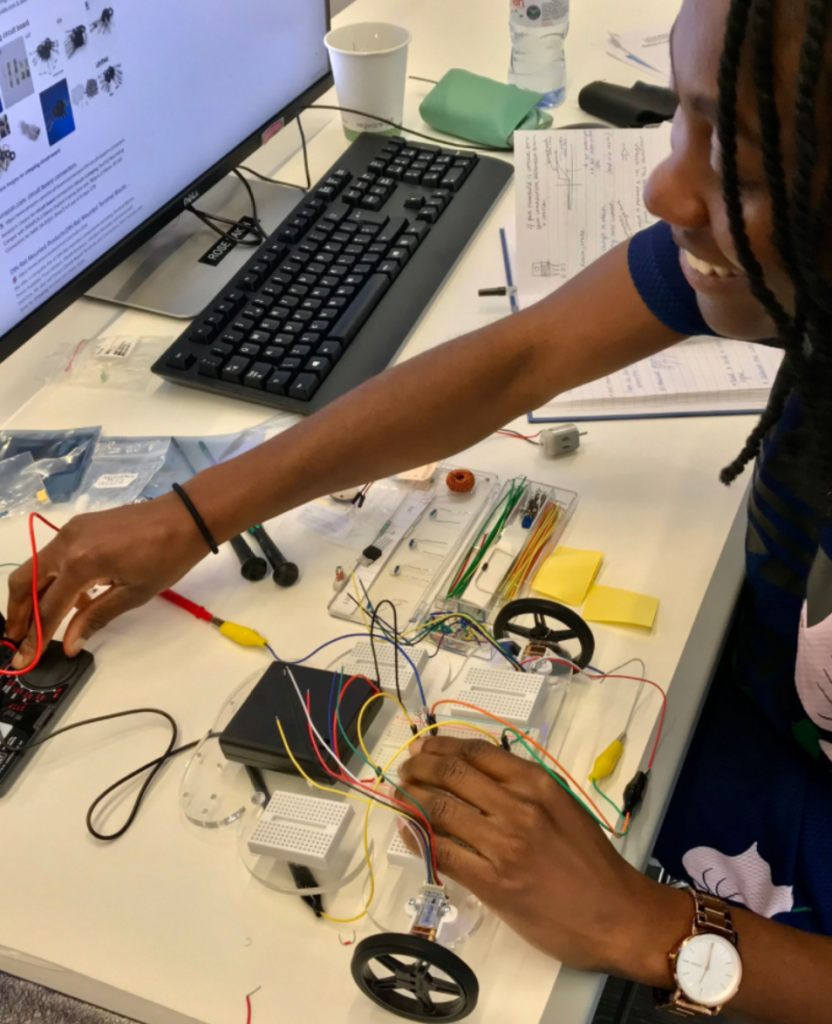

This is a Cajal NeuroKit course that combines online lectures on fundamental and advanced neuroscience topics with hands-on physical experiments. Researchers can participate from anywhere in the world because the course material is shipped to participants in a kit box that contains all the tools needed to follow the online course.

Course overview

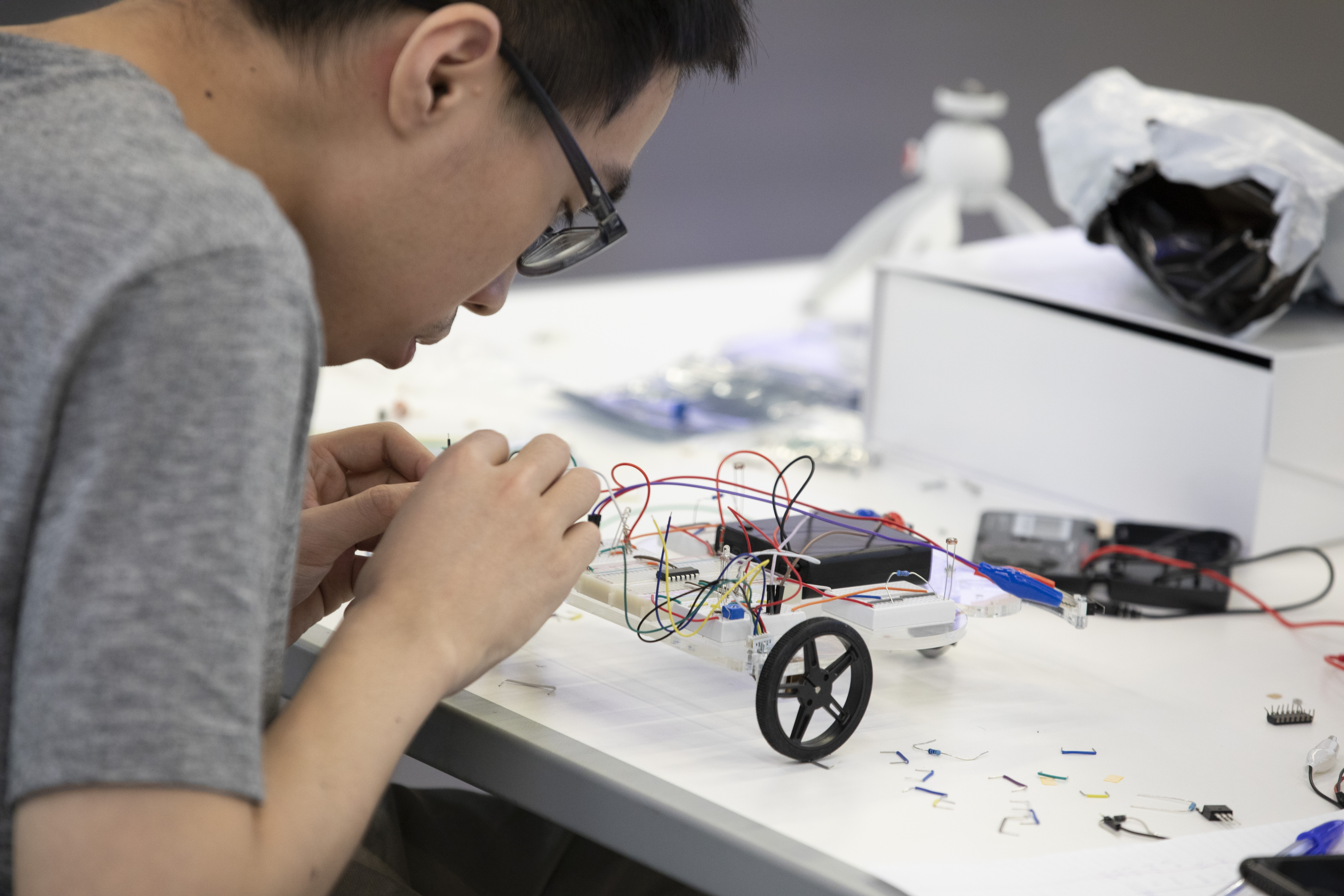

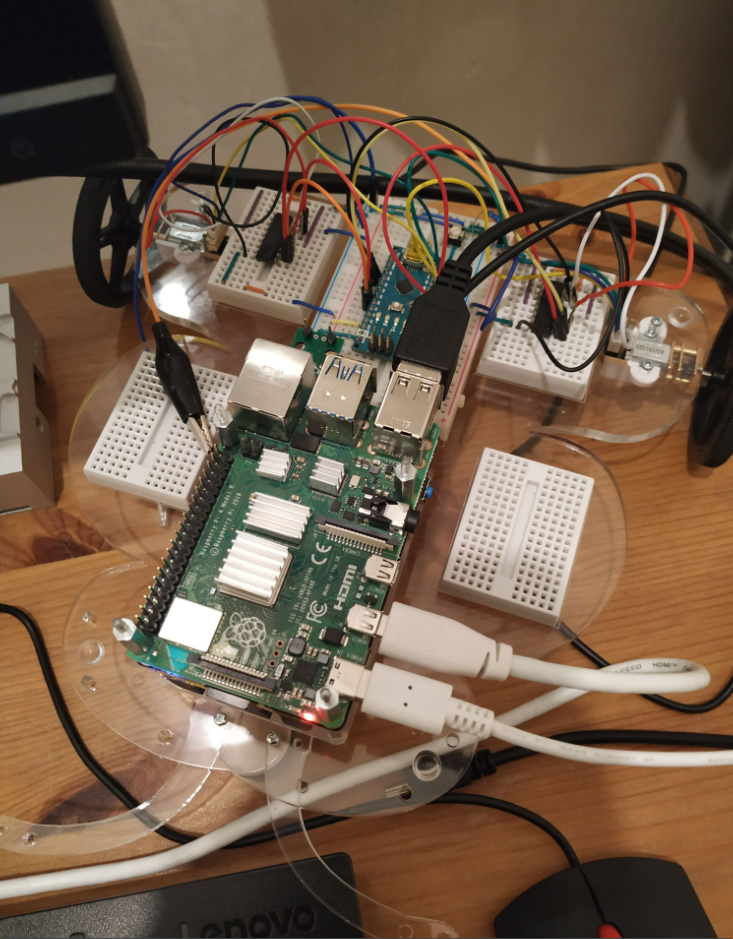

This course provides a foundation in the modern techniques of experimental neuroscience. It introduces the essentials of sensors, motor control, microcontrollers, programming, data analysis, and machine learning by guiding students through the hands-on construction of an increasingly capable robot.

In parallel, related concepts in neuroscience are introduced as nature’s solution to the challenges students encounter while designing and building their own intelligent system.

What will you learn?

The techniques of experimental neuroscience advance at an incredible pace. They incorporate developments from many different fields, requiring new researchers to acquire a broad range of skills and expertise (from building electronic hardware to designing optical systems to training deep neural networks). This overwhelming task encourages students to move quickly, but often by skipping over some essential underlying knowledge.

This course was designed to fill in these knowledge gaps.

By building a robot, you will learn both how the individual technologies work and how to combine them into a complete system. It is this broad but integrated understanding of modern technology that will help students of this course design novel, state-of-the-art neuroscience experiments.

Course directors

Adam Kampff

Course Director

Voight Kampff, London, UK

Andreas Kist

Course Director

Department for Artificial Intelligence in Biomedical Engineering (AIBE), Erlangen, Germany

Elena Dreosti

Co-Director

University College London, UK

Programme

Day 1: Sensors and Motors

What will you learn?

You will learn the basics of analog and digital electronics by building circuits for sensing the environment and controlling movement. These circuits will be used to construct the foundation of your course robot: a Braitenberg Vehicle that uses simple “algorithms” to generate surprisingly complex behaviour.
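To give a feel for how simple such an "algorithm" can be, here is a minimal Python sketch of a two-sensor Braitenberg Vehicle. In the course the behaviour is built from analog circuits, so the function name `braitenberg_step`, the normalised 0 to 1 sensor range, and the `gain` parameter are illustrative assumptions rather than course material.

```python
def braitenberg_step(left_light, right_light, crossed=True, gain=1.0):
    """Map two light readings (0..1) to (left_motor, right_motor) speeds (0..1)."""
    # Clamp the readings so that noisy sensors cannot produce invalid speeds.
    left_light = min(max(left_light, 0.0), 1.0)
    right_light = min(max(right_light, 0.0), 1.0)

    if crossed:
        # Each sensor drives the motor on the opposite side:
        # the wheel far from the light spins faster, so the vehicle turns toward it.
        left_motor, right_motor = right_light, left_light
    else:
        # Each sensor drives the motor on its own side:
        # the wheel near the light spins faster, so the vehicle turns away from it.
        left_motor, right_motor = left_light, right_light

    return min(gain * left_motor, 1.0), min(gain * right_motor, 1.0)


# Hypothetical reading: the light source is on the vehicle's left.
print("crossed:  ", braitenberg_step(0.9, 0.1, crossed=True))
print("uncrossed:", braitenberg_step(0.9, 0.1, crossed=False))
```

Swapping a single pair of connections is enough to change the behaviour from approaching the light to avoiding it.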

Topics and Tasks:

- Electronics (voltage, resistors, Ohm’s law): Build a voltage divider
- Sensing (light-dependent resistors, thermistors): Build a light/temperature sensor
- Movement (electromagnetism, DC motors, gears): Mount and spin your motors
- Amplifying (transistors, op-amps): Build a light-controlled motor
- Basic Behaviour: Build a Braitenberg Vehicle
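The voltage divider and the light sensor in the tasks above both follow from Ohm's law: two resistors in series split the supply voltage in proportion to their resistance. The sketch below works through that arithmetic. The 5 V supply, the 10 kΩ fixed resistor, and the two resistance values for the light-dependent resistor (LDR) are assumed for illustration and are not taken from the course kit.

```python
def divider_output(v_in, r_top, r_bottom):
    """Voltage across r_bottom for two resistors in series across v_in."""
    total = r_top + r_bottom
    if total <= 0:
        raise ValueError("total resistance must be positive")
    # The same current I = v_in / total flows through both resistors,
    # so the voltage across the bottom one is I * r_bottom.
    return v_in * r_bottom / total


# Assumed example values: LDR on top, fixed resistor on the bottom.
V_SUPPLY = 5.0        # volts
R_FIXED = 10_000.0    # ohms

for label, r_ldr in [("dark", 100_000.0), ("bright", 1_000.0)]:
    v_out = divider_output(V_SUPPLY, r_ldr, R_FIXED)
    print(f"{label}: LDR = {r_ldr:.0f} ohm, V_out = {v_out:.2f} V")
```

Because the LDR's resistance falls as light increases, the output voltage rises in bright conditions, which is what turns the divider into a light sensor.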

Day 2: Microcontrollers and Programming

What will you learn?

You will learn how simple digital circuits (logic gates, memory registers, etc.) can be assembled into a (programmable) computer. You will then attach a microcontroller to your course robot, connect it to sensors and motors, and begin to write programs that extend your robot’s behavioural ability.

Topics and Tasks:

- Logic and Memory: Build a logic circuit and a flip-flop
- Processors: Set up a microcontroller and attach inputs and outputs
- Programming: Program a microcontroller (control flow, timers, digital IO, analog IO)
- Intermediate behaviour: Design a state machine to control your course robot
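As an illustration of the state-machine idea, the following sketch models a robot with three states. It is written in Python for readability; on the microcontroller the same structure would be expressed in that device's own language. The state names, the `LIGHT_THRESHOLD` value, and the motor command table are hypothetical.

```python
from enum import Enum, auto


class State(Enum):
    SEARCH = auto()    # rotate on the spot, looking for light
    APPROACH = auto()  # drive toward the light
    AVOID = auto()     # back away from an obstacle


LIGHT_THRESHOLD = 0.5  # assumed normalised light level (0..1)

# Assumed (left, right) motor speeds for each state, in the range -1..1.
MOTOR_COMMANDS = {
    State.SEARCH: (0.3, -0.3),
    State.APPROACH: (0.8, 0.8),
    State.AVOID: (-0.5, -0.5),
}


def next_state(state, light, obstacle):
    """Return the next state given the current state and sensor readings."""
    if obstacle:
        return State.AVOID          # an obstacle overrides everything else
    if state is State.AVOID:
        return State.SEARCH         # obstacle cleared: start searching again
    if light > LIGHT_THRESHOLD:
        return State.APPROACH
    return State.SEARCH


# Simulated sensor readings: (light level, obstacle detected).
state = State.SEARCH
for light, obstacle in [(0.1, False), (0.8, False), (0.9, True), (0.9, False)]:
    state = next_state(state, light, obstacle)
    print(state.name, MOTOR_COMMANDS[state])
```

Keeping the transition logic in one function and the motor outputs in a table makes it easy to add states without rewriting the control loop.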

Day 3: Computers and Programming

What will you learn?

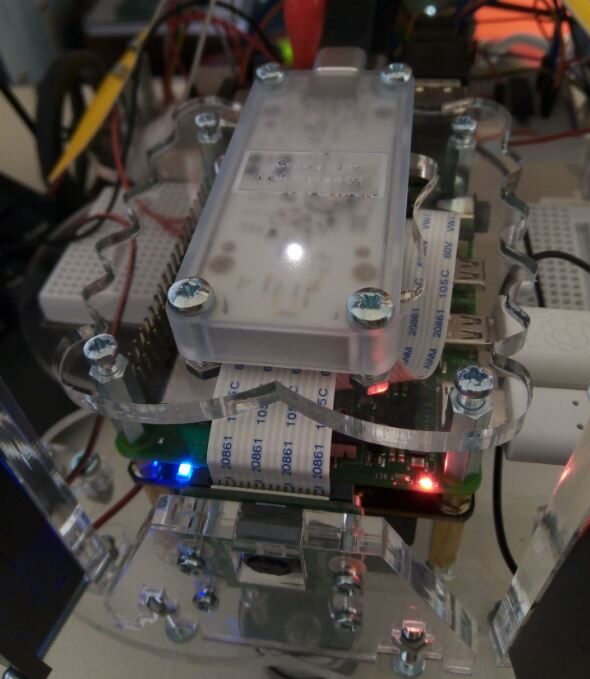

You will learn how a modern computer’s “operating system” (Linux) coordinates the execution of internal and external tasks, and how to communicate over a network (using WiFi). You will then use Python to write a “remote-control” system for your course robot by developing your own communication protocol between your robot’s Linux computer and microcontroller.

Topics and Tasks:

- Operating Systems: Set up a Linux computer (Raspberry Pi)
- Networking: Remotely access a computer (SSH via WiFi)
- Programming: Program a Linux computer (Python)
- Advanced behaviour: Build a remote control robot
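One possible shape for such a communication protocol is a line of ASCII text carrying two motor speeds and a checksum. The sketch below is purely illustrative: the message layout `M,<left>,<right>*<checksum>`, the speed range of -255 to 255, and the function names are assumptions, and the protocol developed in the course may look quite different.

```python
def checksum(payload):
    """XOR of all payload bytes, a simple way to detect corrupted messages."""
    value = 0
    for byte in payload.encode("ascii"):
        value ^= byte
    return value


def encode_command(left, right):
    """Build one message, e.g. for sending from the Linux computer."""
    if not (-255 <= left <= 255 and -255 <= right <= 255):
        raise ValueError("motor speeds must be in the range -255..255")
    payload = f"M,{left},{right}"
    return f"{payload}*{checksum(payload):02X}\n".encode("ascii")


def decode_command(line):
    """Parse one message and return (left, right); raise ValueError if invalid."""
    text = line.decode("ascii").strip()
    payload, separator, received = text.rpartition("*")
    if not separator or int(received, 16) != checksum(payload):
        raise ValueError("corrupt or malformed message")
    kind, left, right = payload.split(",")
    if kind != "M":
        raise ValueError("unknown message type")
    return int(left), int(right)


message = encode_command(120, -80)
print(message)
print(decode_command(message))
```

The trailing newline marks the end of a message, so the receiver can read the link line by line, and the checksum lets it discard lines damaged in transit.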

Day 4: (Machine) Vision

What will you learn?

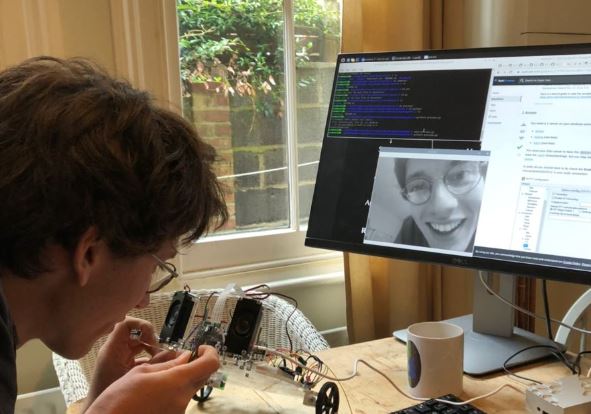

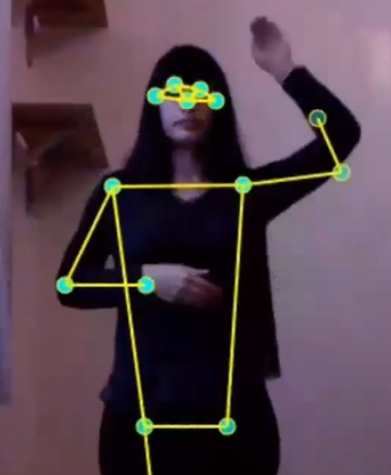

You will learn how grayscale and color images emerge and how to work with them in a Python environment. By mounting a camera on your robot, you can live-stream the images to your computer. You will then use background subtraction and thresholding to program an image-based motion detector. You will use image moments to detect and follow a moving light source, and learn about “classical” face detection.

Topics and Tasks:

- Images: Open, modify, and save images
- Camera: Attach and stream a camera image
- Image processing: Determine differences in images
- Pattern recognition: Extract features from images
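The motion detector and light follower described above can be sketched with NumPy alone: subtract a background image, threshold the difference, and use the zeroth- and first-order image moments of the resulting mask to find the centroid of the change. The synthetic frames, the threshold of 30, and the function names are assumptions made for this example; the course may use a dedicated image-processing library instead.

```python
import numpy as np


def motion_mask(frame, background, threshold=30):
    """Boolean mask of pixels that differ from the background by more than threshold."""
    # Convert to a signed type first so the subtraction cannot wrap around in uint8.
    diff = np.abs(frame.astype(np.int16) - background.astype(np.int16))
    return diff > threshold


def centroid(mask):
    """Centroid (x, y) of a binary mask from its image moments, or None if it is empty."""
    m00 = mask.sum()                 # zeroth moment: number of active pixels
    if m00 == 0:
        return None
    rows, cols = np.indices(mask.shape)
    m10 = (cols * mask).sum()        # first moment along x
    m01 = (rows * mask).sum()        # first moment along y
    return m10 / m00, m01 / m00


# Synthetic grayscale frames: a dim background and a bright 10 x 10 pixel spot.
background = np.full((48, 64), 20, dtype=np.uint8)
frame = background.copy()
frame[10:20, 40:50] = 200

mask = motion_mask(frame, background)
print("changed pixels:", int(mask.sum()))
print("centroid (x, y):", centroid(mask))
```

Comparing the centroid's x coordinate with the image centre gives a simple steering signal for following the light source.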

Day 5: (Machine) Learning

What will you learn?

You will learn about modern deep neural networks and how they are applied in image processing. You will extend your robot’s intelligence by adding a neural accelerator. We will deploy a deep neural network for face detection and compare it to the “classical” face detector. Ultimately, you will create and train your own deep neural network that will allow your robot to identify its creator: you.

Topics and Tasks:

- Inference: Implement a neural accelerator (Google Coral USB EdgeTPU)
- Deployment: Deploy and run a deep neural network
- Object detection: Finding faces using a deep neural network (Single Shot Detector)
- Object classification: Train a deep neural network to identify one’s own face (TF/Keras)

The course will be held from 14:00 to 18:00 CET.

Registration

Registration fee: 500€ per person (includes shipping of the course kit, pre-recorded and live lectures before and during the course, full attendance to the course, and course certificate).

Registration fee for a group: 500€ for one person and one course kit + 150€ per additional person (without the course kit)

To receive more information about this NeuroKit, email info@cajal-training.org